An Automatic Finite-Sample Robustness Check: Can Dropping a Little Data Change Conclusions?

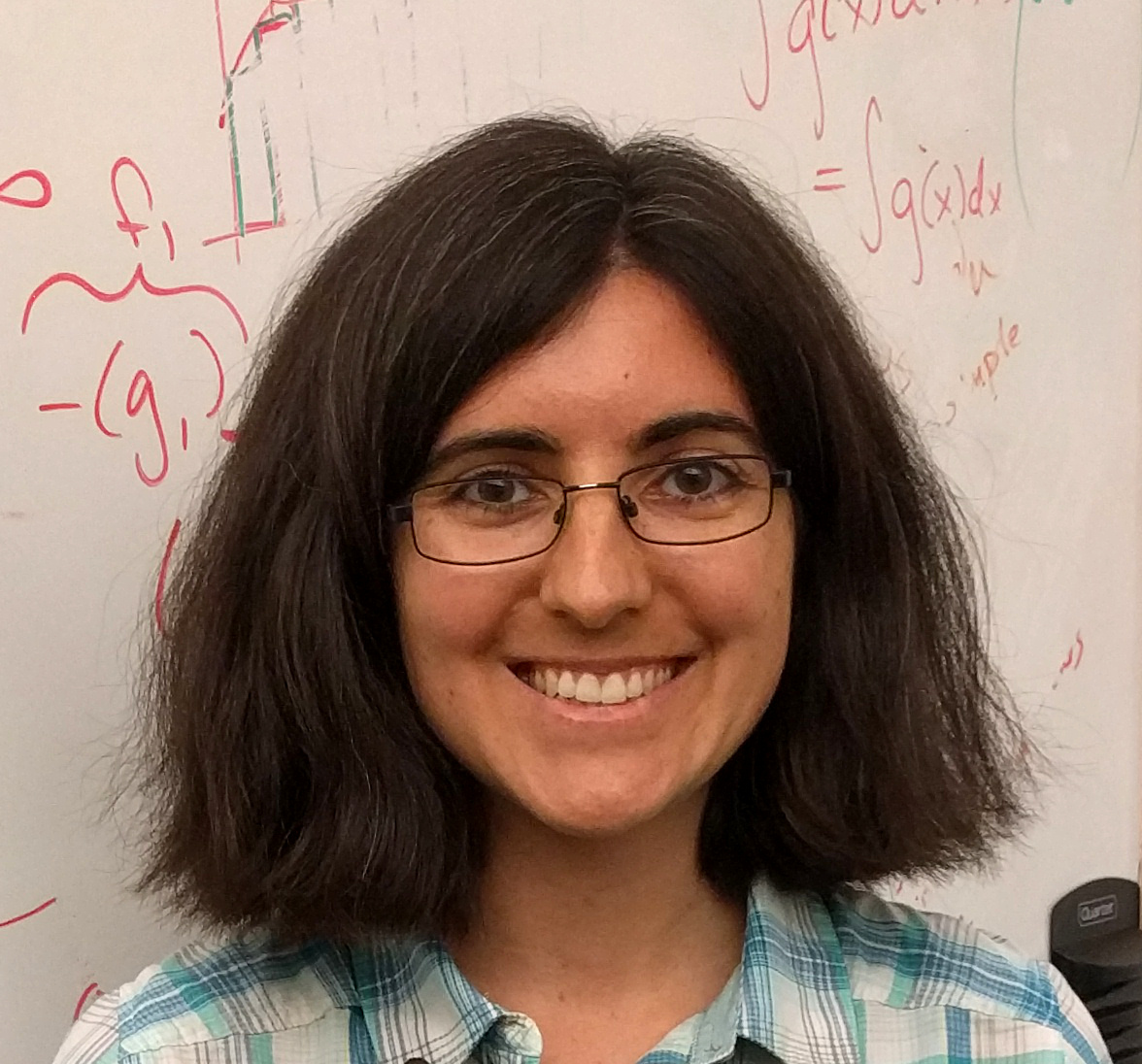

Presenter: Tamara Broderick (Massachusetts Institute of Technology)

Title: An Automatic Finite-Sample Robustness Check: Can Dropping a Little Data Change Conclusions?

Date/Time: Thursday, June 20th, 9:30 - 11:00 AM

Abstract: Practitioners often analyze a data sample with the goal of applying any conclusions to a new population. For instance, if economists conclude from observed data that microcredit is effective at alleviating poverty, policymakers might decide to distribute microcredit in other locations or future years. Typically, the original data is not a perfect random sample from the population where the policy is applied, but researchers might feel comfortable generalizing anyway so long as deviations from random sampling are small and the corresponding impact on conclusions is small as well. Conversely, researchers might worry if a very small proportion of the data sample was instrumental to the original conclusion. We therefore propose a method to assess the sensitivity of conclusions to the removal of a very small fraction of the data set. Manually checking all small data subsets is computationally infeasible, so we propose an approximation based on the classical influence function. Our method is automatically computable for common estimators. We provide error bounds on approximation performance and a low-cost exact lower bound on sensitivity. We find that sensitivity is driven by a signal-to-noise ratio in the inference problem, does not disappear as data accrues, and is not decided by misspecification. Empirically, we find that many data analyses are robust, but the conclusions of several influential economics papers can be changed by removing (much) less than 1% of the data.
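To make the idea in the abstract concrete, the following is a minimal sketch for the special case of ordinary least squares. It is not the presenter's implementation. The function names (`ols`, `approx_most_influential_drop`) and the choice of OLS are assumptions made for illustration. The sketch scores each data point by the first-order (influence-function) effect of removing it on one coefficient, drops the highest-scoring points up to a chosen fraction, and then refits once on the remaining data. Because that refit is an actual change achieved by a small subset, it serves as an exact lower bound on how far the estimate can be moved.

```python
import numpy as np


def ols(X, y):
    """Ordinary least squares coefficients via the normal equations."""
    return np.linalg.solve(X.T @ X, X.T @ y)


def approx_most_influential_drop(X, y, coef_index, drop_fraction):
    """Approximate the subset whose removal most DECREASES one OLS coefficient.

    Hypothetical helper for illustration only. Returns the original estimate,
    the linear (influence-based) prediction after dropping, the exact refit
    after dropping, and the indices of the dropped points.
    """
    n = X.shape[0]
    m = int(np.floor(drop_fraction * n))  # number of points we may drop

    beta = ols(X, y)
    resid = y - X @ beta

    # Derivative of beta with respect to the weight of point i, at weight 1:
    #   (X'X)^{-1} x_i * resid_i.  Column i of the (p, n) matrix below.
    influence = np.linalg.solve(X.T @ X, X.T * resid)[coef_index]

    # Dropping point i changes the coefficient by roughly -influence[i], so
    # the largest positive scores give the largest predicted decrease.
    order = np.argsort(-influence)[:m]
    drop = order[influence[order] > 0]

    predicted = beta[coef_index] - influence[drop].sum()

    # One exact refit without the selected points (cheap: a single regression).
    keep = np.setdiff1d(np.arange(n), drop)
    refit = ols(X[keep], y[keep])[coef_index]

    return beta[coef_index], predicted, refit, drop
```

Comparing the returned `predicted` and `refit` values gives a rough sense of how well the linear approximation tracks the true effect of removal; a conclusion would count as non-robust in this sketch if the refit value crosses a decision threshold (for example, changes sign) after dropping only a small fraction of the points.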

Biography: Tamara Broderick is an Associate Professor with tenure in the Department of Electrical Engineering and Computer Science at MIT. She is a member of the MIT Laboratory for Information and Decision Systems (LIDS), the MIT Statistics and Data Science Center, and the Institute for Data, Systems, and Society (IDSS). She completed her Ph.D. in Statistics at the University of California, Berkeley in 2014. Previously, she received an AB in Mathematics from Princeton University (2007), a Master of Advanced Study for completion of Part III of the Mathematical Tripos from the University of Cambridge (2008), an MPhil by research in Physics from the University of Cambridge (2009), and an MS in Computer Science from the University of California, Berkeley (2013). Her recent research has focused on fast, easy-to-use, and reliable methods for quantifying uncertainty and robustness. She has been awarded designation as an IMS Fellow (2024), selection to the COPSS Leadership Academy (2021), an Early Career Grant (ECG) from the Office of Naval Research (2020), an AISTATS Notable Paper Award (2019), an NSF CAREER Award (2018), a Sloan Research Fellowship (2018), an Army Research Office Young Investigator Program (YIP) award (2017), Google Faculty Research Awards, an Amazon Research Award, the ISBA Lifetime Members Junior Researcher Award, the Savage Award (for an outstanding doctoral dissertation in Bayesian theory and methods), the Evelyn Fix Memorial Medal and Citation (for the Ph.D. student on the Berkeley campus showing the greatest promise in statistical research), the Berkeley Fellowship, an NSF Graduate Research Fellowship, a Marshall Scholarship, and the Phi Beta Kappa Prize (for the graduating Princeton senior with the highest academic average).